Configure an mlmodel detector or classifier

The mlmodel vision service wraps a deployed ML model and exposes it through the standard vision service API. At startup, the service reads the model’s tensor metadata and decides which of three roles the model can fulfill: classifier, detector, or 3D segmenter. It registers every role the model supports.

Prerequisites

Before configuring an mlmodel vision service, you need:

1. A trained or uploaded ML model

Add an existing model from the registry or train one from your data. The model must be in TensorFlow Lite, TensorFlow, ONNX, or PyTorch format.

2. An ML model service running on your machine

Configure an ML model service with an implementation that matches your model format (for example, tflite_cpu, onnx-cpu, tensorflow-cpu, or torch-cpu).

Configure

- Navigate to the CONFIGURE tab of your machine’s page.

- Click the + icon next to your machine part in the left-hand menu and select Configuration block.

- In the search field, type vision or mlmodel and select the vision/mlmodel result.

- Click Add component, enter a name for your service, and click Add component again to confirm.

- In the ML MODEL section, select the ML model service your model is deployed on.

- In the DEFAULT CAMERA section, select the camera the service should use by default for calls such as GetDetectionsFromCamera.

- Adjust other attributes in the attributes table as applicable.

Add the vision service object to the services array in your JSON configuration:

    "services": [
      {
        "name": "<service_name>",
        "api": "rdk:service:vision",
        "model": "mlmodel",
        "attributes": {
          "mlmodel_name": "<mlmodel-service-name>",
          "camera_name": "<camera-name>"
        }
      }
    ]

Example detector configuration with a minimum confidence threshold:

    "services": [
      {
        "name": "person_detector",
        "api": "rdk:service:vision",
        "model": "mlmodel",
        "attributes": {
          "mlmodel_name": "my_mlmodel_service",
          "camera_name": "camera-1",
          "default_minimum_confidence": 0.6
        }
      }
    ]

Example classifier configuration:

    "services": [
      {
        "name": "fruit_classifier",
        "api": "rdk:service:vision",
        "model": "mlmodel",
        "attributes": {
          "mlmodel_name": "fruit_classifier",
          "camera_name": "camera-1"
        }
      }
    ]
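If you generate machine configurations from a script, the service object can be assembled programmatically. The following is a minimal sketch, not part of any Viam SDK: the helper name vision_service_config is hypothetical, and it only covers the attributes shown in the examples above. It rejects a default_minimum_confidence outside the documented 0.0 to 1.0 range before producing the JSON.

```python
import json


def vision_service_config(name, mlmodel_name, camera_name=None,
                          default_minimum_confidence=None):
    """Build one mlmodel vision service entry (hypothetical helper)."""
    if default_minimum_confidence is not None:
        # The attributes table documents the valid range as 0.0 to 1.0.
        if not 0.0 <= default_minimum_confidence <= 1.0:
            raise ValueError("default_minimum_confidence must be in [0.0, 1.0]")

    # mlmodel_name is the only required attribute.
    attributes = {"mlmodel_name": mlmodel_name}
    if camera_name is not None:
        attributes["camera_name"] = camera_name
    if default_minimum_confidence is not None:
        attributes["default_minimum_confidence"] = default_minimum_confidence

    return {
        "name": name,
        "api": "rdk:service:vision",
        "model": "mlmodel",
        "attributes": attributes,
    }


config = {
    "services": [
        vision_service_config(
            "person_detector", "my_mlmodel_service", "camera-1", 0.6
        )
    ]
}
print(json.dumps(config, indent=2))
```

The printed JSON matches the detector example above and can be pasted into the services array of your machine configuration.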

Attributes

| Attribute | Type | Required? | Description |
|---|---|---|---|
| mlmodel_name | string | Required | The name of the ML model service the vision service wraps. |
| camera_name | string | Optional | The default camera to use for calls such as GetDetectionsFromCamera, GetClassificationsFromCamera, and GetObjectPointClouds. |
| default_minimum_confidence | number | Optional | Minimum confidence score (between 0.0 and 1.0) applied to all output labels. Detections and classifications below this value are filtered out. If unset, no filtering is applied. Example: 0.6 |
| label_confidences | object | Optional | Per-label confidence thresholds. Keys are label names and values are minimum confidence scores. When set, label_confidences overrides default_minimum_confidence for the listed labels, and all unlisted labels are filtered out. Example: {"DOG": 0.8, "CARROT": 0.3} |
| label_path | string | Optional | Path to a labels file. Overrides the label file specified in the ML model service. The file contains one label per line; the zero-indexed line number is the class ID. |
| remap_input_names | object | Optional | Map model input tensor names to the names the vision service expects. The service expects image for the input tensor. See Tensor name requirements. |
| remap_output_names | object | Optional | Map model output tensor names to the names the vision service expects (location, category, score for detectors; probability for classifiers). See Tensor name requirements. |
| xmin_ymin_xmax_ymax_order | array of int | Optional | Four-entry permutation indicating the order in which the model outputs bounding box coordinates. Use [0, 1, 2, 3] when the model outputs [xmin, ymin, xmax, ymax]. Use [1, 0, 3, 2] when the model outputs [ymin, xmin, ymax, xmax]. A wrong order is a common source of shifted or mirrored detections when using custom YOLO variants. |
| input_image_mean_value | array of float | Optional | Per-channel mean values subtracted from each pixel before inference. Requires at least 3 values, one per color channel. Set this only when the model was trained with non-default input normalization. If unset, no mean subtraction is applied. Example: [127.5, 127.5, 127.5] |
| input_image_std_dev | array of float | Optional | Per-channel standard deviation values. Each pixel is divided by this value after mean subtraction. Requires at least 3 values, all non-zero. Set this only when the model was trained with non-default input normalization. If unset, no division is applied. Example: [127.5, 127.5, 127.5] |
| input_image_bgr | bool | Optional | Set to true if the model expects BGR channel order instead of RGB. If detections have wrong colors or all labels appear at once, try flipping this. Default: false |
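To make the filtering, box-order, and normalization attributes concrete, here is a rough Python sketch of the behavior the table describes. It is an illustration under stated assumptions, not the service's actual implementation: the function names are hypothetical, label matching is assumed to be exact, a score equal to the threshold is assumed to pass, and the BGR swap is assumed to happen before mean subtraction.

```python
def keep_result(label, confidence, default_minimum_confidence=None,
                label_confidences=None):
    """Return True if a detection or classification survives filtering."""
    if label_confidences:
        # Listed labels use their own threshold; unlisted labels are dropped.
        threshold = label_confidences.get(label)
        return threshold is not None and confidence >= threshold
    if default_minimum_confidence is not None:
        return confidence >= default_minimum_confidence
    return True  # no thresholds configured: nothing is filtered


def reorder_box(raw, order):
    """Rearrange raw model box output into (xmin, ymin, xmax, ymax).

    order[i] is read as the index in the raw output that holds the i-th
    value of (xmin, ymin, xmax, ymax); this reading is an assumption.
    """
    if sorted(order) != [0, 1, 2, 3]:
        raise ValueError("order must be a permutation of [0, 1, 2, 3]")
    return tuple(raw[i] for i in order)


def normalize_pixel(rgb, mean=None, std_dev=None, bgr=False):
    """Apply the optional channel swap, mean subtraction, and division."""
    channels = list(reversed(rgb)) if bgr else list(rgb)
    if mean is not None:
        channels = [c - m for c, m in zip(channels, mean)]
    if std_dev is not None:
        if any(s == 0 for s in std_dev):
            raise ValueError("std_dev values must be non-zero")
        channels = [c / s for c, s in zip(channels, std_dev)]
    return tuple(channels)


thresholds = {"DOG": 0.8, "CARROT": 0.3}
print(keep_result("DOG", 0.85, 0.6, thresholds))   # listed, above 0.8
print(keep_result("CAT", 0.99, 0.6, thresholds))   # unlisted label
print(reorder_box([20, 10, 220, 110], [1, 0, 3, 2]))
print(normalize_pixel((255, 0, 127.5), [127.5] * 3, [127.5] * 3))
```

With a mean and standard deviation of 127.5, pixel values in the range 0 to 255 map to the range -1.0 to 1.0, which is a common input range for models trained with that normalization.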

Tensor name requirements

The vision service expects specific tensor names from the wrapped ML model:

| Service role | Input tensor | Output tensors |
|---|---|---|
| Detector | image | location, category, score |
| Classifier | image | probability |

If your model uses different tensor names, set remap_input_names and remap_output_names to bridge them:

Detector example:

    {
      "api": "rdk:service:vision",
      "model": "mlmodel",
      "attributes": {
        "mlmodel_name": "my_model",
        "remap_input_names": {
          "my_model_input_tensor1": "image"
        },
        "remap_output_names": {
          "my_model_output_tensor1": "category",
          "my_model_output_tensor2": "location",
          "my_model_output_tensor3": "score"
        },
        "camera_name": "camera-1"
      },
      "name": "my-vision-service"
    }

Classifier example:

    {
      "api": "rdk:service:vision",
      "model": "mlmodel",
      "attributes": {
        "mlmodel_name": "my_model",
        "remap_input_names": {
          "my_model_input_tensor1": "image"
        },
        "remap_output_names": {
          "my_model_output_tensor1": "probability"
        },
        "camera_name": "camera-1"
      },
      "name": "my-vision-service"
    }

If a Viam-trained model already uses these names, you can skip remap_input_names and remap_output_names entirely.
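Before deploying, it can help to check which roles a set of tensor names would satisfy once the remaps are applied. The sketch below is a simplified model of that check based only on the tensor name table above. It is an assumption that names alone decide the role; the real service reads the model's tensor metadata and may apply additional rules. The function names apply_remap and supported_roles are hypothetical.

```python
# Expected tensor names, taken from the table in this section.
DETECTOR_OUTPUTS = {"location", "category", "score"}
CLASSIFIER_OUTPUTS = {"probability"}


def apply_remap(tensor_names, remap=None):
    """Translate model tensor names using a remap_*_names style mapping."""
    remap = remap or {}
    return [remap.get(name, name) for name in tensor_names]


def supported_roles(model_inputs, model_outputs,
                    remap_input_names=None, remap_output_names=None):
    """Return the set of roles the remapped tensor names would satisfy."""
    inputs = set(apply_remap(model_inputs, remap_input_names))
    outputs = set(apply_remap(model_outputs, remap_output_names))

    roles = set()
    if "image" not in inputs:
        return roles  # without an image input, neither role applies
    if DETECTOR_OUTPUTS <= outputs:
        roles.add("detector")
    if CLASSIFIER_OUTPUTS <= outputs:
        roles.add("classifier")
    return roles


roles = supported_roles(
    ["my_model_input_tensor1"],
    ["my_model_output_tensor1", "my_model_output_tensor2",
     "my_model_output_tensor3"],
    remap_input_names={"my_model_input_tensor1": "image"},
    remap_output_names={
        "my_model_output_tensor1": "category",
        "my_model_output_tensor2": "location",
        "my_model_output_tensor3": "score",
    },
)
print(roles)
```

An empty result from a check like this suggests a missing or misspelled entry in remap_input_names or remap_output_names.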

Test your detector or classifier

Test an mlmodel vision service from the Control tab, with images in the cloud, or with code.

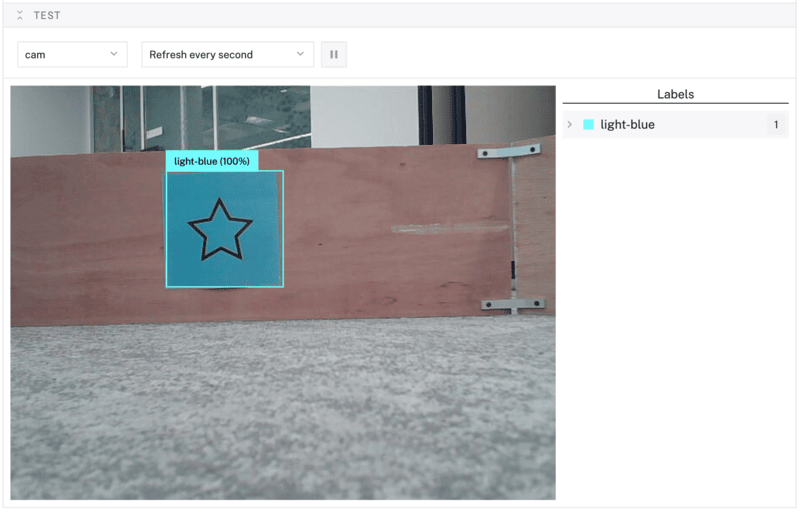

Live camera footage

- Open your machine in the Viam app and click the vision service’s Test area, or navigate to the CONTROL tab and select the vision service.

- In the Camera dropdown, select the camera whose feed you want the vision service to run on. Detections above default_minimum_confidence appear as bounding boxes on the live camera feed and refresh automatically.

If you want a continuous overlay in the Control tab, configure a transform camera:

Detections overlay:

    {
      "pipeline": [
        {
          "type": "detections",
          "attributes": {
            "confidence_threshold": 0.5,
            "detector_name": "<vision-service-name>",
            "valid_labels": ["<label>"]
          }
        }
      ],
      "source": "<camera-name>"
    }

Classifications overlay:

    {
      "pipeline": [
        {
          "type": "classifications",
          "attributes": {
            "confidence_threshold": 0.5,
            "classifier_name": "<vision-service-name>",
            "max_classifications": 5,
            "valid_labels": ["<label>"]
          }
        }
      ],
      "source": "<camera-name>"
    }

Images in the cloud

If you have images stored in the Viam Cloud, you can run your classifier against them:

- Navigate to the DATA tab and click an image to open the expanded view.

- Click the Auto-prediction mode icon in the image toolbar (or press M).

- In the Run model panel, click Choose ML model, pick your model and version, then click Run.

Code

The following examples get detections or classifications from a camera. Replace "camera-1" with the name of the camera you configured.

Get detections (Python):

    from viam.components.camera import Camera
    from viam.services.vision import VisionClient

    # Assumes connect() is defined as in your machine's connection code
    # and that this snippet runs inside an async function.
    robot = await connect()

    camera_name = "camera-1"
    cam = Camera.from_robot(robot, camera_name)
    my_detector = VisionClient.from_robot(robot, "my_detector")

    # Get detections from the camera in one call
    detections = await my_detector.get_detections_from_camera(camera_name)

    # Or capture an image first, then run detections on it
    images, _ = await cam.get_images()
    img = images[0]
    detections_from_image = await my_detector.get_detections(img)

    await robot.close()

Get detections (Go):

    import (
        "context"

        "go.viam.com/rdk/components/camera"
        "go.viam.com/rdk/services/vision"
    )

    // Assumes machine and logger are already set up by your connection code.
    cameraName := "camera-1"
    myCam, err := camera.FromProvider(machine, cameraName)
    if err != nil {
        logger.Fatalf("cannot get camera: %v", err)
    }

    myDetector, err := vision.FromProvider(machine, "my_detector")
    if err != nil {
        logger.Fatalf("cannot get vision service: %v", err)
    }

    // Get detections from the camera in one call
    detections, err := myDetector.DetectionsFromCamera(context.Background(), cameraName, nil)
    if err != nil {
        logger.Fatalf("could not get detections: %v", err)
    }
    if len(detections) > 0 {
        logger.Info(detections[0])
    }

    // Or capture an image first, then run detections on it
    img, err := camera.DecodeImageFromCamera(context.Background(), myCam, nil, nil)
    if err != nil {
        logger.Fatalf("could not decode image from camera: %v", err)
    }

    detectionsFromImage, err := myDetector.Detections(context.Background(), img, nil)
    if err != nil {
        logger.Fatalf("could not get detections: %v", err)
    }
    if len(detectionsFromImage) > 0 {
        logger.Info(detectionsFromImage[0])
    }
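Once you have a list of detections, a common next step is to pick the most confident match for a label and locate it in the frame. The sketch below shows one way to do that in Python. It uses a stand-in Box dataclass so that it runs without a machine; the field names (class_name, confidence, x_min, y_min, x_max, y_max) are assumed to mirror those on the detection objects the SDK returns, and the helper names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """Stand-in for a detection result, for illustration only."""
    class_name: str
    confidence: float
    x_min: int
    y_min: int
    x_max: int
    y_max: int


def best_detection(detections, label, min_confidence=0.0):
    """Return the highest-confidence detection for label, or None."""
    matches = [
        d for d in detections
        if d.class_name == label and d.confidence >= min_confidence
    ]
    return max(matches, key=lambda d: d.confidence, default=None)


def box_center(detection):
    """Return the (x, y) pixel center of a detection's bounding box."""
    return (
        (detection.x_min + detection.x_max) / 2,
        (detection.y_min + detection.y_max) / 2,
    )


sample = [
    Box("person", 0.55, 10, 20, 110, 220),
    Box("person", 0.91, 200, 40, 300, 240),
    Box("dog", 0.97, 0, 0, 50, 50),
]
best = best_detection(sample, "person", min_confidence=0.6)
print(best.class_name, best.confidence, box_center(best))
```

In real code you would pass the list returned by get_detections_from_camera in place of sample.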

Get classifications (Python):

    from viam.components.camera import Camera
    from viam.services.vision import VisionClient

    # Assumes connect() is defined as in your machine's connection code
    # and that this snippet runs inside an async function.
    robot = await connect()

    camera_name = "camera-1"
    cam = Camera.from_robot(robot, camera_name)
    my_classifier = VisionClient.from_robot(robot, "my_classifier")

    # Get the top 2 classifications from the camera in one call
    classifications = await my_classifier.get_classifications_from_camera(
        camera_name, 2)

    # Or capture an image first, then run classifications on it
    images, _ = await cam.get_images()
    img = images[0]
    classifications_from_image = await my_classifier.get_classifications(img, 2)

    await robot.close()

Get classifications (Go):

    import (
        "context"

        "go.viam.com/rdk/components/camera"
        "go.viam.com/rdk/services/vision"
    )

    // Assumes machine and logger are already set up by your connection code.
    cameraName := "camera-1"
    myCam, err := camera.FromProvider(machine, cameraName)
    if err != nil {
        logger.Fatalf("cannot get camera: %v", err)
    }

    myClassifier, err := vision.FromProvider(machine, "my_classifier")
    if err != nil {
        logger.Fatalf("cannot get vision service: %v", err)
    }

    // Get the top 2 classifications from the camera in one call
    classifications, err := myClassifier.ClassificationsFromCamera(context.Background(), cameraName, 2, nil)
    if err != nil {
        logger.Fatalf("could not get classifications: %v", err)
    }
    if len(classifications) > 0 {
        logger.Info(classifications[0])
    }

    // Or capture an image first, then run classifications on it
    img, err := camera.DecodeImageFromCamera(context.Background(), myCam, nil, nil)
    if err != nil {
        logger.Fatalf("could not decode image from camera: %v", err)
    }

    classificationsFromImage, err := myClassifier.Classifications(context.Background(), img, 2, nil)
    if err != nil {
        logger.Fatalf("could not get classifications: %v", err)
    }
    if len(classificationsFromImage) > 0 {
        logger.Info(classificationsFromImage[0])
    }

Tip

To fetch an image, detections, classifications, and point cloud objects in one round trip, use CaptureAllFromCamera. This is more efficient than separate calls and guarantees all results correspond to the same frame.
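As a rough illustration of the single round trip described in the tip, the following Python sketch wraps one call and returns the results together. It assumes the Python SDK exposes this as capture_all_from_camera with return_image, return_detections, and return_classifications keyword flags, and that the result has image, detections, and classifications attributes; verify these against your SDK version. StubVision is a hypothetical stand-in so the sketch runs without a machine.

```python
import asyncio
from types import SimpleNamespace


async def capture_frame_results(vision, camera_name):
    """Fetch image, detections, and classifications for one frame."""
    result = await vision.capture_all_from_camera(
        camera_name,
        return_image=True,
        return_detections=True,
        return_classifications=True,
    )
    return {
        "image": result.image,
        "detections": list(result.detections or []),
        "classifications": list(result.classifications or []),
    }


class StubVision:
    """Hypothetical stand-in for a vision client, for illustration only."""

    async def capture_all_from_camera(self, camera_name, return_image=False,
                                      return_classifications=False,
                                      return_detections=False,
                                      return_object_point_clouds=False):
        # Return only what was requested, as a real service would.
        return SimpleNamespace(
            image=b"fake-image" if return_image else None,
            detections=["det"] if return_detections else [],
            classifications=["cls"] if return_classifications else [],
            objects=[],
        )


summary = asyncio.run(capture_frame_results(StubVision(), "camera-1"))
print(sorted(summary))
```

With a real machine, you would pass the object returned by VisionClient.from_robot in place of StubVision() and await the helper from your own async code.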

